While planning a Glassfish 3.1 cluster deployment, one of the major differences I found, from an administrator's perspective, between cluster support in Glassfish v3.1 and Glassfish v2 is that 3.1 does not support node agents. A node agent is the process that controls the life cycle of server instances; normally one node agent runs per box. The 3.1 release has SSH capability, which enables centralized management of a cluster, so the functionality provided by a node agent can now be performed over SSH. Personally I find this more exciting than using a node agent, as SSH is a very well established standard and it eliminates the dependency on a separate process (the node agent) on each node (this means I do not need to memorize the node agent related commands :) ).

As we are using Windows on our application servers, the first thing that came to my mind was how to deploy the Glassfish 3.1 cluster, as it needs SSH to communicate (it's possible to deploy the cluster without SSH as well). Then my old rescuer Cygwin (http://www.cygwin.com/) came to mind, and yes, we can deploy a Glassfish cluster on Windows using Cygwin. In this blog post I will show you how to create a simple two-node cluster with two instances on two Windows 2003 servers (named Glassfish1 and Glassfish2) with the help of Cygwin.

The setup will be as shown in the diagram:

Install JDK:

First I will install the JDK (the minimum supported version is JDK 1.6.0_22) on all the servers.

Install Cygwin:

After that I will install Cygwin: download it from http://www.cygwin.com/ and run the setup.

The setup will ask where to get the packages. The first time I will download them from the Internet; later I can reuse the downloaded files for the other installations.

Select the root directory for cygwin installation.

Select a directory where the downloaded files will be stored.

Select the Internet connection type.

Select the site from which you will download the files; it's wise to select a site close to your location.

Select the packages you want to install; make sure that you have selected the SSH-related packages (the openssh package).

Cygwin downloads the packages into the directory we selected.

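After the installation is completed, we have to set up the SSH server.

Enter the command ssh-host-config in the Cygwin command prompt and follow the onscreen instructions.

After the SSH setup is done, start the SSH daemon using the command

cygrunsrv -S sshd

(sshd is the service name that ssh-host-config normally registers; use your own name if you chose a different one.)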
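For repeat installations I find it handy to wrap the start step in a small function for the Cygwin shell. This is only a sketch under assumptions: it assumes the service was registered under the name sshd (pass another name if yours differs), and the CYGRUNSRV variable is my own addition so that the function can be pointed at a different binary or stubbed out for a dry run.

```shell
#!/bin/sh
# Start the Cygwin SSH service and then print its state.
# Assumption: the service name defaults to "sshd"; pass a different
# name as the first argument if ssh-host-config registered another one.
start_ssh_service() {
    service="${1:-sshd}"
    cygrunsrv="${CYGRUNSRV:-cygrunsrv}"   # hypothetical override hook
    "$cygrunsrv" -S "$service" || return 1   # -S starts the service
    "$cygrunsrv" -Q "$service"               # -Q queries its status
}
```

After sourcing the file, calling start_ssh_service starts the daemon, and a quick ssh localhost confirms that it accepts connections.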

Once Cygwin is installed on each SSH node, we are ready to create our cluster.

Install Glassfish and DAS:

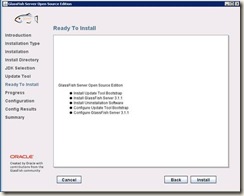

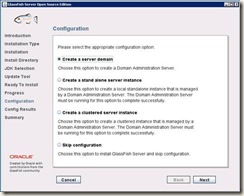

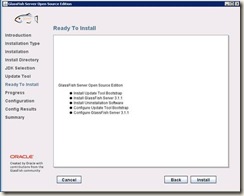

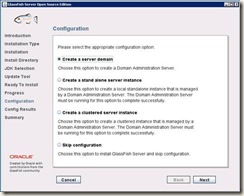

First I will install the Domain Admin Server (DAS) on my first server, Glassfish1. Run the Glassfish 3.1 setup.

Select Custom Installation

This installs my DAS server and creates the domain.

Optionally, we can turn on remote administration of the domain using the command

asadmin enable-secure-admin

This command enables remote administration and encrypts all admin traffic. If we are not using SSH, it is what allows us to set up a remote instance on a host other than the one where the DAS is running.
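As far as I know, secure admin only takes effect after the running servers of the domain are restarted, so the two steps can be sketched together as below. The domain name domain1 matches this setup; the ASADMIN variable is my own addition so you can point the function at the asadmin launcher of your installation.

```shell
#!/bin/sh
# Enable secure admin, then restart the domain so the change takes effect.
# Assumption: the domain is called domain1 unless a name is passed in.
enable_remote_admin() {
    domain="${1:-domain1}"
    asadmin="${ASADMIN:-asadmin}"   # hypothetical override hook
    "$asadmin" enable-secure-admin || return 1
    "$asadmin" restart-domain "$domain"
}
```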

Setup SSH authentication:

When an SSH client connects to an SSH service, the service needs to authenticate the connecting user. So before starting our remote access, we have to set up SSH authentication. Glassfish 3.1 supports three types of SSH authentication:

1. Username/Password

2. Public key without encryption

3. Public key with passphrase-protected encryption

We are going to use public key authentication without encryption, and for that Glassfish provides the setup-ssh subcommand, which generates a key pair and distributes the public key file to the specified hosts.

I will run the setup-ssh command from my DAS host Glassfish1, and it will set up SSH authentication with the remote host Glassfish2.

cmd> asadmin setup-ssh Glassfish2
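To convince myself that key-based login really works before going further, a check along these lines can help. It is a sketch, not part of Glassfish: the function name and the ASADMIN and SSH variables are my own, and it assumes the ssh client from Cygwin's OpenSSH package is on the PATH.

```shell
#!/bin/sh
# Distribute the DAS public key to a host, then prove key login works.
setup_ssh_for_host() {
    host="$1"
    asadmin="${ASADMIN:-asadmin}"   # hypothetical override hooks
    ssh="${SSH:-ssh}"
    # setup-ssh may prompt for the remote user's password the first time.
    "$asadmin" setup-ssh "$host" || return 1
    # BatchMode=yes disables password prompts, so this command succeeds
    # only when public key authentication is really in place.
    "$ssh" -o BatchMode=yes "$host" echo "key login to $host works"
}
```

Calling setup_ssh_for_host Glassfish2 on the DAS host should end by printing the confirmation message from the remote side.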

Install Glassfish on remote node:

First of all we have to install Glassfish on the server Glassfish2.

There are two ways to do the installation:

1. Install manually by running the Glassfish setup on the server Glassfish2.

2. Install remotely from the DAS server using the install-node command. This command creates an image of the DAS's Glassfish installation and then installs that image on the Glassfish2 server.

I will use the second method in my example. So run this command on the DAS:

cmd> asadmin install-node --installdir D:/Glassfish3 Glassfish2

This command will install Glassfish in the d:\Glassfish3 directory on the Glassfish2 server.

Create Nodes:

Now I will create the nodes of the cluster.

There are two types of nodes:

SSH nodes: An SSH node communicates over secure shell (SSH). If we wish to administer the Glassfish instances centrally, the instances must reside on SSH nodes.

CONFIG nodes: This type of node does not support remote communication. If we want to administer our instances locally, the instances can reside on CONFIG nodes. The DAS comes with one CONFIG node named localhost-<domain name>; in our case it is localhost-domain1. This node is already created, so we do not have to create it.

As we already have one CONFIG node, I am going to create an SSH node on the second server, Glassfish2.

Run the following command on the DAS:

cmd> asadmin create-node-ssh --nodehost Glassfish2 --installdir d:/Glassfish3 node2

This command will create one SSH node named node2 on the Glassfish2 server. Here we have to specify the host on which the node will be created, the Glassfish installation directory, and the name of the node.

Both our nodes are now created, and we can see them in the admin console: localhost-domain1 and node2.
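If you prefer the command line to the admin console, the same check can be sketched with two asadmin subcommands: ping-node-ssh tests that the DAS can reach the node over SSH, and list-nodes prints the nodes of the domain. The function name and the ASADMIN variable are my own additions.

```shell
#!/bin/sh
# Check that the DAS can reach an SSH node, then list all nodes.
verify_node() {
    node="$1"
    asadmin="${ASADMIN:-asadmin}"   # hypothetical override hook
    "$asadmin" ping-node-ssh "$node" || return 1
    "$asadmin" list-nodes
}
```

Running verify_node node2 on the DAS should report that the node is reachable and then list both localhost-domain1 and node2.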

Create the cluster:

At this point we are ready to create the cluster. In the admin console, go to Clusters and click the New button. Enter the name of the cluster and click OK to create it. Here we are creating a cluster named solar.

Create the instances of the cluster:

Now I will add the instances of the solar cluster. Go to Clusters -> solar, open the Instances tab, and click New to add one.

Enter the name of the instance and the node where it will reside. Here I will create two instances: one will reside on the local node localhost-domain1 and the other on node2. The first instance I create is instance2, and it will reside on node2.

Our instance is created, but it is not running yet.

Creating the second instance:

Once both instances are created, start them. Our cluster is then ready, and we can deploy our web applications to it.
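The console steps from the last two sections also have command-line equivalents, which is useful if you want to script the setup. The sketch below uses the cluster and node names from this post; instance1 is a hypothetical name for the instance on the local node (I only named instance2 above), and the ASADMIN variable is my own addition.

```shell
#!/bin/sh
# Create the solar cluster, add one instance per node, and start it.
build_solar_cluster() {
    asadmin="${ASADMIN:-asadmin}"   # hypothetical override hook
    cluster="solar"
    "$asadmin" create-cluster "$cluster" || return 1
    # instance1 is an assumed name; the post only names instance2.
    "$asadmin" create-instance --node localhost-domain1 \
        --cluster "$cluster" instance1 || return 1
    "$asadmin" create-instance --node node2 \
        --cluster "$cluster" instance2 || return 1
    "$asadmin" start-cluster "$cluster" || return 1
    "$asadmin" list-instances   # shows each instance and its status
}
```

Run it on the DAS host once the nodes exist; the final list-instances output should show both instances as running.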

Configurations that are to be done at the application side:

Change in web.xml file

The <distributable/> element has to be added to the web.xml file. This tag signifies that the web application is suitable for running in a distributed environment, i.e. in a cluster.
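It is easy to forget this element, so before deploying I sometimes run a quick check like the one below. It is a crude text match with grep, not a real XML parse, and the function name is my own; it only recognises the self-closing form of the element.

```shell
#!/bin/sh
# Return success if the given web.xml contains a <distributable/> element.
# Crude text match: accepts <distributable/> and <distributable />,
# but does not parse XML and will not see <distributable></distributable>.
is_distributable() {
    grep -Eq '<distributable[[:space:]]*/>' "$1"
}
```

For example, is_distributable WEB-INF/web.xml || echo "missing <distributable/>" prints a warning when the element is absent.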

Addition of new elements in glassfish-web.xml:

The following elements are added to the glassfish-web.xml file:

<session-config>
  <session-manager persistence-type="replicated">
    <manager-properties>
      <property name="relaxCacheVersionSemantics" value="true"/>
      <property name="persistenceFrequency" value="web-method"/>
    </manager-properties>
    <store-properties>
      <property name="persistenceScope" value="session"/>
    </store-properties>
  </session-manager>
  <session-properties/>
  <cookie-properties/>
</session-config>

· <session-manager persistence-type="replicated"> is added to specify the use of in-memory replication in the cluster.

· <property name="persistenceFrequency" value="web-method"/> specifies that the session is replicated after each request.

· <property name="persistenceScope" value="session"/> specifies that on each request the whole HttpSession is replicated in the cluster, not just the attributes that changed.

· If multiple client threads in the application concurrently access the same session ID, then add <property name="relaxCacheVersionSemantics" value="true"/> to the glassfish-web.xml file. This lets the web container return, for each requesting thread, whatever version of the session is in the active cache, regardless of the version number. Otherwise you may experience session loss even without any instance failure.

Application deployment in cluster:

Go to the cluster and open the Applications tab. Click the Deploy button.

Make sure that the Availability checkbox is enabled.

Also verify that the cluster name is in the Selected Targets list.
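The same deployment can be sketched from the command line. The --target option selects the cluster and --availabilityenabled=true corresponds to the Availability checkbox. The function name, the ASADMIN variable, and the WAR file name in the usage note are my own placeholders.

```shell
#!/bin/sh
# Deploy a WAR to a cluster with high availability enabled.
# Assumption: the target defaults to the solar cluster from this post.
deploy_to_cluster() {
    war="$1"
    cluster="${2:-solar}"
    asadmin="${ASADMIN:-asadmin}"   # hypothetical override hook
    "$asadmin" deploy --target "$cluster" --availabilityenabled=true "$war"
}
```

For example, deploy_to_cluster myapp.war deploys the (hypothetical) myapp.war to the solar cluster.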

Configuring the Glassfish cluster in Citrix Netscaler load balancer:

Go to Load Balancing -> Servers

Add both the physical servers (Glassfish1 and Glassfish2) to the load balancer. In our example the Glassfish1 server is named Solarex2 and the Glassfish2 server is named SolarexCluster2.

After adding the servers, we have to add the services on these servers. The HTTP port for both our instances is 28080.
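Before pointing the load balancer at the instances, it can be worth confirming that each one actually answers on port 28080. The sketch below assumes curl is installed (it is available as a Cygwin package); the function name and the CURL and PORT variables are my own additions.

```shell
#!/bin/sh
# Print the HTTP status code returned by each instance host.
# Assumption: every instance listens on the same port (28080 here).
check_instances() {
    port="${PORT:-28080}"
    curl="${CURL:-curl}"   # hypothetical override hook
    for host in "$@"; do
        # -s silences progress, -o /dev/null drops the body,
        # -w prints only the HTTP status code.
        code=$("$curl" -s -o /dev/null -w '%{http_code}' "http://$host:$port/")
        echo "$host:$port -> HTTP $code"
    done
}
```

Calling check_instances Glassfish1 Glassfish2 prints one line per host; a code of 000 means curl could not connect at all.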

Go to Load Balancing -> Services

Adding the service for the first instance:

Adding the service for the second instance of the cluster:

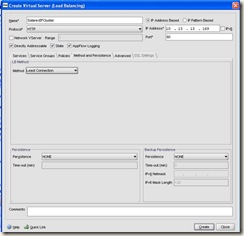

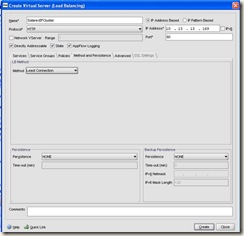

Once the services for both instances are created, I will create the virtual server for my Glassfish cluster.

Go to Load Balancing -> Virtual Servers

I am going to name this virtual server SolarexEFCluster and select both the services we have just created.

In the Method and Persistence tab, select the load balancing method as per your requirements; in my case I have selected the Least Connection method.

Now the final task is to forward the client requests from our firewall to the load balancer virtual server we have created.

So that’s all we need to do, and our Glassfish 3.1 cluster is ready for use :).